Critical Threat Intelligence & Advisory Summaries

McKinsey AI Chatbot Breach Analysis (OFA): Shadow AI Exposure and Identity Governance Failure

Operational Failure Analysis: OFA-2026-03-MKC

A security incident involving McKinsey & Company’s internal AI chatbot platform (Lilli) in early 2026 highlights emerging risks in enterprise generative-AI deployments. Public reporting indicates that security researchers were able to obtain read-write access to the chatbot’s backend systems through vulnerabilities in exposed application programming interfaces (APIs). While McKinsey stated that the issues were rapidly remediated and that no client data was compromised, the incident provides a useful case study in AI platform security, API exposure, and identity governance.

The Bottom Line: The McKinsey/Lilli incident shows that AI systems are not "magic boxes"; they sit on top of traditional databases and APIs. Security teams must move beyond simple chat filtering and protect the Prompt Layer and API Endpoints with the same rigor as production financial systems.

1. Breach Snapshot

Target Organization: McKinsey & Company

Industry/Sector: Management Consulting

Incident Period: February–March 2026 (publicly reported March 9, 2026)

Operational Impact (confirmed)

Security researchers reported that vulnerabilities in McKinsey’s internal AI chatbot infrastructure allowed:

• read access to certain chatbot data structures

• modification of system prompts

• interaction with backend database components

McKinsey stated that the vulnerabilities were quickly patched following responsible disclosure and that no client data was accessed or compromised.

Primary Access Vector (public reporting)

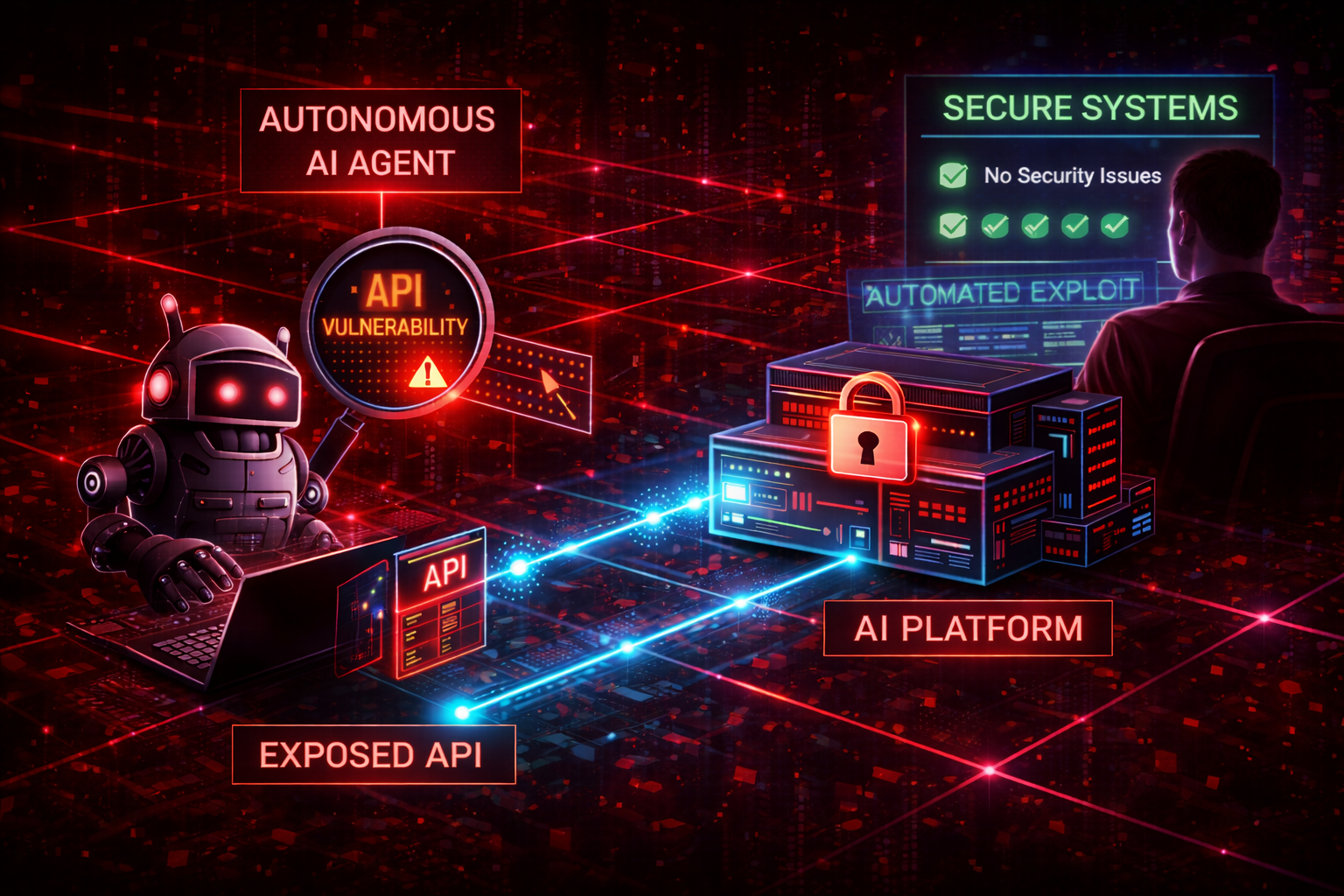

Researchers identified an SQL injection vulnerability in exposed API endpoints that allowed direct interaction with chatbot backend systems. The discovery was reportedly made with an autonomous AI agent, which illustrates how AI can be used to rapidly identify and weaponize flaws in AI-enabled enterprise platforms.

Vulnerability / Threat Metadata

| Field | Status |
|---|---|
| CVE(s) | None publicly assigned |
| Vendor advisory | Not published |
| CISA KEV | Not listed |
| EPSS score | Not available |

Operational Relevance

The incident highlights several emerging risk areas in enterprise AI deployments:

• exposed API endpoints within AI systems

• insufficient authorization controls on backend services

• limited monitoring of automated vulnerability discovery

• rapid enterprise deployment of generative-AI infrastructure

2. Timeline of Signals (Defender Perspective)

| Date | Event | Signal Available to Defenders |
|---|---|---|
| Late Feb 2026 | Researchers identify vulnerability in chatbot infrastructure | Application security testing signal |
| Feb 2026 | Automated AI agent discovers exploitable injection vulnerability | API monitoring / anomaly detection opportunity |
| Mar 1 2026 | Responsible disclosure reported to McKinsey | Remediation opportunity |
| Early Mar 2026 | Vulnerability patched | Containment successful |
| Mar 9 2026 | Incident publicly reported | Industry awareness |

3. Exposure Window Analysis

Exposure window analysis evaluates how long defenders had to detect or prevent the issue.

| Signal | Defender Opportunity |
|---|---|
| AI chatbot deployed internally | Security architecture review |
| API endpoints exposed | Attack surface monitoring |
| Injection vulnerability present | Application security testing |
| Researcher discovery | Responsible disclosure remediation |
| Patch deployment | Incident containment |

Operational Insight

The window between vulnerability discovery and remediation appears relatively short. However, the presence of a traditional web vulnerability in an enterprise AI system suggests that AI deployments may inherit classic application security risks when development and testing processes are incomplete.

4. Attack Path (Reconstructed from Public Reporting)

Public reporting suggests the following sequence.

Initial Access

Method

• SQL injection vulnerability in exposed chatbot API endpoints.

• Security researchers reportedly used an automated AI agent to discover and exploit the flaw.
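The injection class described above can be illustrated with a minimal sketch. It assumes a hypothetical `documents` table and uses SQLite purely as a stand-in; the actual Lilli schema, database engine, and vulnerable queries have not been published. The first function concatenates user input into the SQL text, while the second binds it as a parameter.

```python
import sqlite3


def find_documents_unsafe(conn, title):
    # Vulnerable pattern: user input becomes part of the SQL text itself.
    query = f"SELECT id, title FROM documents WHERE title = '{title}'"
    return conn.execute(query).fetchall()


def find_documents_safe(conn, title):
    # Safer pattern: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, title FROM documents WHERE title = ?", (title,)
    ).fetchall()


def build_demo_db():
    # Hypothetical table, for illustration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE documents (id INTEGER PRIMARY KEY, title TEXT)")
    conn.executemany(
        "INSERT INTO documents (title) VALUES (?)",
        [("public-notes",), ("internal-draft",)],
    )
    return conn


conn = build_demo_db()
payload = "x' OR '1'='1"          # classic tautology payload
leaked = find_documents_unsafe(conn, payload)   # returns every row
blocked = find_documents_safe(conn, payload)    # returns no rows
```

With the concatenated query the payload turns the filter into a condition that is always true, so every row is returned; with the bound parameter the same string is treated as an ordinary (non-matching) title.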

Persistence

Because access depended on a vulnerability in application queries, persistence mechanisms were not required. Access remained possible while the vulnerable endpoint was active.

Privilege Escalation / System Access

Public reporting suggests the vulnerability allowed:

• database enumeration

• modification of chatbot prompts

• limited backend interaction

These capabilities were removed once patches were applied.

Lateral Movement

No public evidence indicates lateral movement into other enterprise systems.

Impact

Confirmed

• security researchers demonstrated backend access to the chatbot system

• vulnerabilities were patched after disclosure

Not confirmed

• unauthorized client data access

• credential compromise

• persistence inside internal networks

5. Control Failure Matrix

This table evaluates the incident through control system breakdowns rather than attacker narrative.

| Control Layer | Potential Failure | Detection Opportunity |
|---|---|---|
| Asset Discovery | AI platform APIs exposed externally | Attack surface monitoring |
| Application Security | SQL injection vulnerability | API security testing |
| System Prompt Integrity | DB injection allowed the model's "brain" (prompts) to be rewired | Prompt Hash Mismatch: Automated alerts when the active system prompt string differs from the version-controlled "Golden Image". |
| Identity Governance | Weak authorization controls | Access control reviews |
| Detection | Automated scanning activity not detected | API anomaly monitoring |
| Containment | Rapid patching after disclosure | Effective remediation |
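The "Prompt Hash Mismatch" detection in the table can be sketched roughly as follows. This is an illustrative outline, not a description of any control McKinsey runs: the prompt text, the function names, and the idea of recording the digest at deploy time are all assumptions.

```python
import hashlib
import hmac


def prompt_digest(prompt: str) -> str:
    # SHA-256 over the exact prompt bytes; any edit changes the digest.
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


def prompt_matches_golden(active_prompt: str, golden_digest: str) -> bool:
    # Constant-time comparison of the two hex digests.
    return hmac.compare_digest(prompt_digest(active_prompt), golden_digest)


# Hypothetical "Golden Image"; in practice the digest would be recorded
# from version control at deploy time, not computed next to the check.
GOLDEN_PROMPT = "You are an internal research assistant. Cite sources."
GOLDEN_DIGEST = prompt_digest(GOLDEN_PROMPT)


def check_prompt(active_prompt: str) -> str:
    if prompt_matches_golden(active_prompt, GOLDEN_DIGEST):
        return "ok"
    return "ALERT: prompt hash mismatch"
```

A scheduled job could call `check_prompt` with whatever prompt string the running service actually loads and forward any alert to the SOC. The check only has value if the golden digest is stored somewhere the application's database credentials cannot modify.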

6. Defender Decision Points

This section identifies where security teams could potentially have intervened.

| Decision Point | Available Signal | Possible Action |
|---|---|---|
| AI chatbot deployed | New application exposure | Security review of AI architecture |
| API endpoints exposed | Attack surface monitoring alert | Restrict access / authentication |
| Injection vulnerability present | Application security testing | Patch before production release |
| Responsible disclosure received | Security report submitted | Immediate remediation |
| Automated Vulnerability Probing | High-velocity, structured API requests from non-standard user agents (AI bot signatures) | Implement rate limiting and behavioral blocking via WAF/API Gateway to throttle automated discovery agents. |
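The rate-limiting action in the last row is normally a WAF or API gateway feature, but the underlying idea can be shown with a small per-client sliding-window limiter. The class name, thresholds, and client identifiers below are illustrative assumptions, and timestamps are passed in explicitly so the behavior is easy to follow.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client_id -> recent timestamps

    def allow(self, client_id, now):
        hits = self._hits[client_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # throttle: too many recent requests
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=1.0)
# Five requests from one client at the given times (seconds).
decisions = [limiter.allow("client-a", t) for t in (0.0, 0.1, 0.2, 0.3, 1.05)]
```

In this run the fourth request is throttled because three requests already fall inside the one-second window, and the fifth is allowed once the oldest has aged out. Throttling slows automated discovery rather than preventing it, so it complements fixing the underlying flaw.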

Operational Interpretation

The most effective intervention point would likely have been application security testing prior to deployment. Once the system was externally accessible, automated vulnerability discovery tools were able to identify exploitable weaknesses quickly.

7. Exploitation Intelligence Context

The incident reflects a broader trend in enterprise security.

Expanding AI Attack Surfaces

Enterprise generative-AI systems frequently integrate:

• internal document repositories

• knowledge management systems

• corporate data stores

These integrations create aggregation points for sensitive information, increasing the security importance of AI platforms.

Automated Vulnerability Discovery

The research also illustrates how automated tools, including AI-assisted systems, can rapidly discover vulnerabilities in exposed services.

This capability reduces the time required to identify exploitable weaknesses and may increase pressure on organizations to implement stronger automated security testing and monitoring.

Comparable Incidents

Security issues affecting AI systems have appeared in multiple sectors.

For example, researchers previously reported vulnerabilities in an AI-powered hiring chatbot platform used by a major fast-food chain, which exposed millions of records due to weak access controls.

These incidents demonstrate that AI platforms often rely on traditional web infrastructure and remain vulnerable to established application security flaws.

8. What Would Have Prevented or Contained This

Immediate Controls (0–48 hours)

• disable vulnerable API endpoints

• patch injection vulnerabilities

• enforce authentication for backend APIs

• review system logs for abnormal queries

• validate AI platform access controls

Structural Improvements (30–90 days)

AI platform governance

• treat AI systems as full production applications

• include them in asset inventories and threat models

• disable or strictly authenticate API documentation (Swagger/OpenAPI) in production environments.

Application security

• implement automated API security scanning

• perform injection vulnerability testing

• treat 'System Prompts' as immutable code or protected configuration, not just rows in a standard database table reachable by the application's service account.

Architecture

• segment AI platforms from sensitive data stores

• enforce least-privilege database access

• Prompt-as-Code (PaC) Architecture

- Immutable System Prompts: Treat the "System Prompt" as immutable code rather than mutable database data.

- Hardened Storage: Store primary AI instructions in read-only environment variables or encrypted Secret Managers (e.g., AWS Secrets Manager, Azure Key Vault) instead of standard relational database tables. This ensures that even a successful SQL injection cannot "re-program" the AI’s core logic.
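A minimal sketch of the Prompt-as-Code idea, assuming a hypothetical environment variable named `SYSTEM_PROMPT` that the deployment pipeline (or a secrets manager integration) populates. The prompt is read once at startup and never fetched from a database table, so a SQL injection against the application database has nothing to overwrite.

```python
import os
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class SystemPrompt:
    # Frozen dataclass: attribute assignment raises FrozenInstanceError.
    # This guards against accidental mutation; it is not a security boundary.
    text: str


def load_system_prompt(env_var="SYSTEM_PROMPT"):
    # env_var is a hypothetical name chosen for this sketch.
    text = os.environ.get(env_var)
    if not text:
        raise RuntimeError(f"{env_var} is not set; refusing to start without a prompt")
    return SystemPrompt(text=text)


# Demo only: in a real deployment the platform sets this value.
os.environ.setdefault("SYSTEM_PROMPT", "Answer from approved sources only.")
prompt = load_system_prompt()
```

Failing closed when the variable is missing is a deliberate choice here: a service that silently falls back to a database-stored prompt would reintroduce the mutable path this design is meant to remove.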

Monitoring

• detect abnormal query patterns

• monitor for automated enumeration activity

• incorporate 'AI Red Teaming' that specifically uses autonomous agents to probe defenses, as they may find 'chained' bugs that humans overlook.
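As a rough illustration of "abnormal query pattern" detection, the sketch below counts request parameters that match a few common SQL injection signatures, grouped by client. The patterns and client identifiers are assumptions for illustration; signature matching of this kind produces both false positives and false negatives and is a detection aid, not a substitute for parameterized queries.

```python
import re

# A few illustrative signatures; real rule sets are far larger.
SQLI_PATTERNS = [
    re.compile(r"(?i)\bunion\b.+\bselect\b"),
    re.compile(r"(?i)\bor\b\s+'?\d+'?\s*=\s*'?\d+'?"),
    re.compile(r"(?i)\binformation_schema\b"),
    re.compile(r"--|/\*"),
]


def looks_like_sqli(value):
    return any(pattern.search(value) for pattern in SQLI_PATTERNS)


def flag_suspicious(requests):
    """requests: iterable of (client_id, parameter_value) pairs."""
    counts = {}
    for client_id, value in requests:
        if looks_like_sqli(value):
            counts[client_id] = counts.get(client_id, 0) + 1
    return counts


sample = [
    ("agent-7", "x' OR '1'='1"),
    ("agent-7", "1 UNION SELECT name FROM sqlite_master"),
    ("user-1", "quarterly report"),
]
suspicious = flag_suspicious(sample)
```

A client that accumulates many matches in a short period is a reasonable candidate for throttling or review, which is where this kind of signal pairs with enumeration monitoring.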

Strategic Context & Further Reading

🔗 The Invisible Inventory: Why AI Security Starts Where Most Organizations Haven't Even Looked

Why read this: The pressure to deploy Generative AI often leads to "security debt." Explore how the rush to integrate tools like Lilli can bypass standard procurement and security reviews, creating the exact visibility gaps exploited by autonomous agents.

🔗 AI Impersonation & Synthetic Identity Threats: Enterprise Detection & Risk Guide (2026)

Why read this: Once an attacker modifies a "System Prompt," the AI effectively becomes an impersonator of the brand. This article delves into how compromised AI logic can be used to generate high-fidelity synthetic identities and misinformation at scale.

🔗 Vulnerability Management: Operational Risk & Exposure-Based Prioritization

Why read this: While "AI" is the buzzword, "SQL Injection" was the weapon. This article discusses how to shift your focus from thousands of theoretical bugs to the handful of vulnerabilities, like the ones found in this incident, that attackers are actually weaponizing today.


9. Operator Checklist

Identity

☐ Are AI platform APIs authenticated?

☐ Are service accounts restricted to least privilege?

☐ Are API tokens rotated regularly?

Discovery

☐ Are AI systems included in asset inventories?

☐ Are exposed APIs monitored for internet exposure?

Application Security

☐ Are APIs tested for injection vulnerabilities?

☐ Is automated API security scanning enabled?

Prompt Integrity & Governance

☐ Is the system prompt version-controlled? (i.e., is there a "Golden Image" to compare against?)

☐ Is a Prompt Hash Mismatch alert active? (Does the system notify SecOps if the active prompt string deviates from the deployment manifest?)

☐ Is "Semantic Drift" monitoring enabled? (Are automated "Watchdog" queries used to detect if the bot’s identity or instructions have been altered?)

☐ Are database permissions for prompt tables restricted? (Ensure the application service account has SELECT only; UPDATE/DELETE should require a break-glass admin credential.)
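The last checklist item can be demonstrated with a small sketch in which SQLite's read-only connection mode stands in for a `SELECT`-only grant on a production database. The `prompts` table and its contents are hypothetical; on a server database the equivalent control would be the privileges granted to the application service account.

```python
import os
import sqlite3
import tempfile


def create_prompt_db(path):
    # Done once by an administrative credential, not by the application.
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE prompts (name TEXT PRIMARY KEY, body TEXT)")
    conn.execute(
        "INSERT INTO prompts VALUES ('system', 'Answer from approved sources only.')"
    )
    conn.commit()
    conn.close()


def open_read_only(path):
    # mode=ro makes SQLite reject every write on this connection.
    return sqlite3.connect(f"file:{path}?mode=ro", uri=True)


def try_overwrite_prompt(conn):
    try:
        conn.execute("UPDATE prompts SET body = 'attacker text' WHERE name = 'system'")
        return "write succeeded"
    except sqlite3.OperationalError as exc:
        return f"write rejected: {exc}"


db_path = os.path.join(tempfile.mkdtemp(), "prompts.db")
create_prompt_db(db_path)
app_conn = open_read_only(db_path)
result = try_overwrite_prompt(app_conn)
body = app_conn.execute(
    "SELECT body FROM prompts WHERE name = 'system'"
).fetchone()[0]
```

Even if an injection flaw lets an attacker run arbitrary statements over the application's connection, the write is refused and the stored prompt is unchanged. Reads are still possible, so this limits tampering rather than disclosure.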

Containment

☐ Can AI platform services be isolated quickly?

☐ Are backend databases segmented from AI interfaces?

The Agentic Threat Model

As enterprises deploy 'Agentic AI' (AI that can take actions), the risk is no longer just data exfiltration, but Action Hijacking. If an attacker can write to the database that stores an agent's 'tools' or 'instructions,' they can redirect the AI to perform unauthorized actions (e.g., sending emails, moving funds, or deleting records) without ever needing a traditional shell.
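One way to reduce Action Hijacking risk is to define the set of callable tools in code and treat any tool name arriving from a database or model output as untrusted input. The sketch below assumes two hypothetical tools and a simple dispatcher; it illustrates the allowlist idea only and is not a complete agent framework.

```python
from types import MappingProxyType


def search_docs(query):
    return f"searching for: {query}"


def send_summary(text):
    return f"summary queued: {text}"


# Read-only view over the tool registry; it is fixed at deploy time and
# cannot be extended by writing to a database row.
APPROVED_TOOLS = MappingProxyType(
    {"search_docs": search_docs, "send_summary": send_summary}
)


def dispatch(tool_name, argument):
    # tool_name may come from stored agent instructions or model output,
    # so it is checked against the code-defined allowlist before use.
    tool = APPROVED_TOOLS.get(tool_name)
    if tool is None:
        raise PermissionError(f"tool {tool_name!r} is not on the code-defined allowlist")
    return tool(argument)
```

For example, `dispatch("search_docs", "Q3 pricing")` runs normally, while a name such as `"transfer_funds"` injected into the agent's stored instructions raises `PermissionError` instead of executing.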

About This Report

Reading Time: Approximately 15 minutes

Attribution Note

This analysis is based on publicly available reporting and security research summaries. Some technical details may change as additional information becomes available.

At the time of publication, McKinsey indicated that the vulnerabilities had been remediated and that no client data was compromised.

Author Information

Timur Mehmet | Founder & Lead Editor

Timur is a veteran Information Security professional with a career spanning over three decades. Since the 1990s, he has led security initiatives across high-stakes sectors, including Finance, Telecommunications, Media, and Energy. Professional qualifications over the years have included CISSP, ISO27000 Auditor, ITIL and technologies such as Networking, Operating Systems, PKI, Firewalls. For more information including independent citations and credentials, visit our About page.


Editorial Standards

This article adheres to Hackerstorm.com's commitment to accuracy, independence, and transparency:

- Fact-Checking: All statistics and claims are verified against primary sources and authoritative reports

- Source Transparency: Original research sources and citations are provided in the References section below

- No Conflicts of Interest: This analysis is independent and not sponsored by any vendor or organization

- Corrections Policy: We correct errors promptly and transparently.

Editorial Policy: Ethics, Non-Bias, Fact Checking and Corrections

Learn More: About Hackerstorm.com | FAQs

Source Transparency

Primary Sources

- McKinsey Official Statement: (Published March 11, 2026).

Key takeaway: McKinsey confirmed the vulnerability was patched within hours and reported that third-party forensics found no evidence of client data compromise.

- CodeWall Research: (Published March 9, 2026).

Key takeaway: Detailed breakdown of the "AI vs. AI" methodology, where an autonomous agent chained SQL injection and BOLA (Broken Object Level Authorization) vulnerabilities to gain read-write access.

Secondary Analysis & Context

- The Register: (March 9, 2026).

- Promptfoo Security Blog: (March 10, 2026).

- Pomerium Architectural Review: (March 11, 2026).